Optical imaging and characterization of weakly scattering phase objects, such as isolated cells, bacteria and thin tissue sections frequently used in biological research and medical applications, have been of significant interest for decades. When these ‘phase objects’ are illuminated, the scattered light is usually much weaker than the light passing directly through the specimen, which results in poor image contrast with traditional imaging methods. This low contrast can be overcome using, for example, chemical stains or fluorescent tags. However, these external labeling or staining methods are often tedious and costly, and they often involve toxic chemicals.
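To make the contrast problem concrete, here is a minimal NumPy sketch. It assumes an idealized, purely phase-modulating specimen under uniform illumination and a perfect in-focus intensity detector; the names `phase_map` and `michelson_contrast` are illustrative and do not come from the paper.

```python
import numpy as np

# Hypothetical phase map of a thin transparent specimen (radians).
rng = np.random.default_rng(0)
phase_map = rng.uniform(0.0, np.pi, size=(64, 64))

# An ideal phase object only delays the wave: unit amplitude, varying phase.
field = np.exp(1j * phase_map)

# A camera records intensity, which discards the phase entirely.
intensity = np.abs(field) ** 2

def michelson_contrast(image):
    """Contrast defined as (max - min) / (max + min)."""
    return (image.max() - image.min()) / (image.max() + image.min())

contrast = michelson_contrast(intensity)
print(f"phase range: {np.ptp(phase_map):.2f} rad, intensity contrast: {contrast:.2e}")
```

Under these assumptions the recorded intensity is flat even though the phase varies by several radians, which is why the phase information has to be converted into intensity by some other means.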

Quantitative phase imaging (QPI) has emerged as a powerful label-free approach for optical examination and sensing of various weakly scattering, transparent phase objects. The past few decades have witnessed the development of numerous digital methods for quantitative phase imaging based on image reconstruction algorithms running on computers to recover the object’s phase image from various interferometric measurements. These digital QPI techniques, powered by graphics processing units (GPUs), have been used in different applications, including pathology, cell biology, immunology, and cancer research, among others.

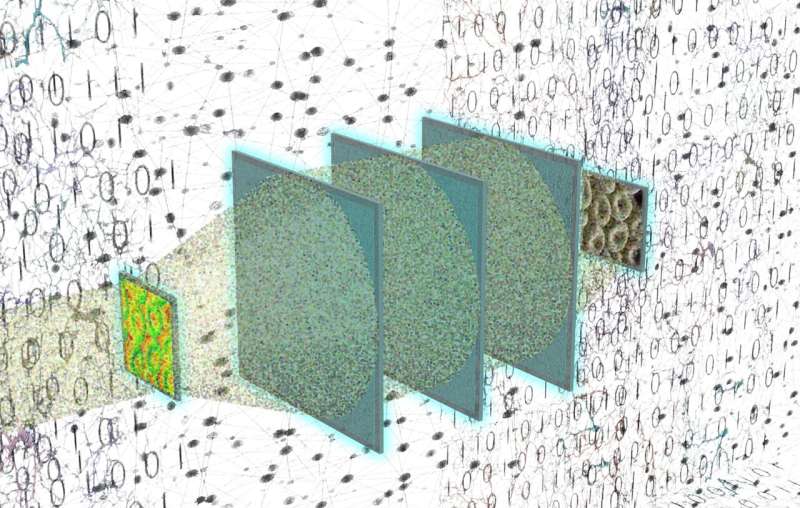

In a new research paper published in Advanced Optical Materials, a team of optical engineers led by Professor Aydogan Ozcan from the Electrical and Computer Engineering Department and California NanoSystems Institute (CNSI) at the University of California, Los Angeles (UCLA) developed a diffractive optical network that replaces the digital image reconstruction algorithms used in QPI systems with a series of passive optical surfaces that are spatially engineered using deep learning. In conventional QPI systems, the phase recovery step is performed on a digital computer using an intensity measurement or a hologram. A diffractive QPI network instead directly processes the optical waves generated by the object itself, retrieving the phase information of the specimen as the light propagates through the diffractive network. The entire phase recovery and quantitative phase imaging process is therefore completed at the speed of light and without the need for an external power source, except for the illumination light. After the light interacts with the object of interest and propagates through the spatially engineered passive layers, the recovered phase image of the sample appears at the output of the diffractive network as an intensity image, so that the phase features of the object at the input are converted into intensity at the output.
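The forward pass of such a network can be sketched numerically. The following is a minimal illustration, not the authors' implementation: it assumes scalar free-space propagation by the angular spectrum method, thin phase-only layers, and arbitrary units. The function names, the layer count, and the random (untrained) layer values are all hypothetical; in the reported work the layers are optimized with deep learning.

```python
import numpy as np

def angular_spectrum_propagate(field, wavelength, pixel_size, distance):
    """Propagate a square complex field through free space by `distance`."""
    n = field.shape[0]
    fx = np.fft.fftfreq(n, d=pixel_size)            # spatial frequencies
    fxx, fyy = np.meshgrid(fx, fx, indexing="ij")
    # Squared longitudinal frequency; non-positive values are evanescent.
    kz_sq = (1.0 / wavelength) ** 2 - fxx ** 2 - fyy ** 2
    propagating = kz_sq > 0
    kz = 2 * np.pi * np.sqrt(np.where(propagating, kz_sq, 0.0))
    transfer = np.where(propagating, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

def diffractive_forward(input_phase, layer_phases, wavelength, pixel_size, spacing):
    """Send a unit-amplitude phase object through passive phase-only layers."""
    field = np.exp(1j * input_phase)                # object imprints its phase
    for layer in layer_phases:
        field = angular_spectrum_propagate(field, wavelength, pixel_size, spacing)
        field = field * np.exp(1j * layer)          # passive layer: phase delay only
    field = angular_spectrum_propagate(field, wavelength, pixel_size, spacing)
    return np.abs(field) ** 2                       # the sensor records intensity

# Illustrative values in arbitrary units; layers are random, not trained.
rng = np.random.default_rng(0)
n = 32
input_phase = rng.uniform(0.0, np.pi, size=(n, n))
layer_phases = [rng.uniform(0.0, 2 * np.pi, size=(n, n)) for _ in range(3)]
output = diffractive_forward(input_phase, layer_phases,
                             wavelength=1.0, pixel_size=1.0, spacing=40.0)
print(output.shape, output.sum())
```

Because every step in this sketch is either free-space propagation or a phase delay, no energy is added along the way, which mirrors the article's point that the layers are passive. Training would adjust `layer_phases` so that the output intensity tracks the input phase.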

These results constitute the first all-optical phase retrieval and phase-to-intensity transformation achieved through diffraction. According to the results presented by the UCLA team, diffractive QPI networks trained using deep learning can generalize not only to unseen phase objects that statistically resemble the training images, but also to entirely new types of objects with different spatial features. In addition, these diffractive QPI networks are designed so that the quantification of the input phase is invariant to possible changes in the illumination light intensity or the detection efficiency of the image sensor. The team also showed that diffractive QPI networks could be optimized to maintain their quantitative phase image quality even under mechanical misalignments of their diffractive layers.
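The article does not say how the intensity invariance is obtained. One way it could work, assumed here purely for illustration, relies on the fact that a passive optical system is linear in the field, so the whole output intensity scales with illumination power and detector efficiency; dividing by a reference signal from the same output cancels that common factor. In the sketch below the random matrix `system` is a hypothetical stand-in for any linear optical system, and the split into signal and reference pixels is arbitrary.

```python
import numpy as np

rng = np.random.default_rng(1)
n_in, n_signal, n_reference = 16, 16, 4

# Hypothetical stand-in for a linear, passive optical system.
system = (rng.normal(size=(n_signal + n_reference, n_in))
          + 1j * rng.normal(size=(n_signal + n_reference, n_in)))
input_field = np.exp(1j * rng.uniform(0.0, np.pi, size=n_in))

def detect(field, illumination_power=1.0, detector_efficiency=1.0):
    """Measured intensity for a given source power and sensor efficiency."""
    out_field = system @ (np.sqrt(illumination_power) * field)
    return detector_efficiency * np.abs(out_field) ** 2

def normalize(intensity):
    """Divide the signal pixels by the mean of the reference pixels."""
    return intensity[:n_signal] / intensity[n_signal:].mean()

raw_a = detect(input_field)
raw_b = detect(input_field, illumination_power=7.5, detector_efficiency=0.3)

print(np.allclose(raw_a, raw_b))                        # raw readings differ
print(np.allclose(normalize(raw_a), normalize(raw_b)))  # normalized ones agree
```

The raw readings change with the source and the sensor, while the normalized values stay the same, so a phase estimate built on the normalized output would not depend on either.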

The diffractive QPI networks reported by the UCLA team represent a new phase imaging concept that, in addition to its superior computational speed, completes the phase recovery process as the light passes through thin, passive diffractive surfaces, and therefore eliminates the power consumption and memory usage required in digital QPI systems, potentially paving the way for various new applications in microscopy and sensing.
